I had this keyboard sitting around the house for a while and decided to modify it – specifically, to swap the switches for silent ones so I can use it in an office environment.

tl;dr It’s not as “hot-swappable” as it was advertised to be.

Switch compatibility

It seems to be compatible only with Outemu 3-pin switches. Their electrical pins are narrower than usual, which is what the board’s sockets fit.

I tried using an Akko 5-pin switch by cutting off the two extra plastic pins on the sides, but it still didn’t fit well. The electrical pins and the center supporting stem are a little too thick. Even after shaving down the electrical pins, the switch did not sit flush with the top plate, so I’d advise against using any 5-pin switches, as their dimensions seem to be slightly off. Some other 3-pin switches may work, but I’m sticking with Outemu 3-pin for now.

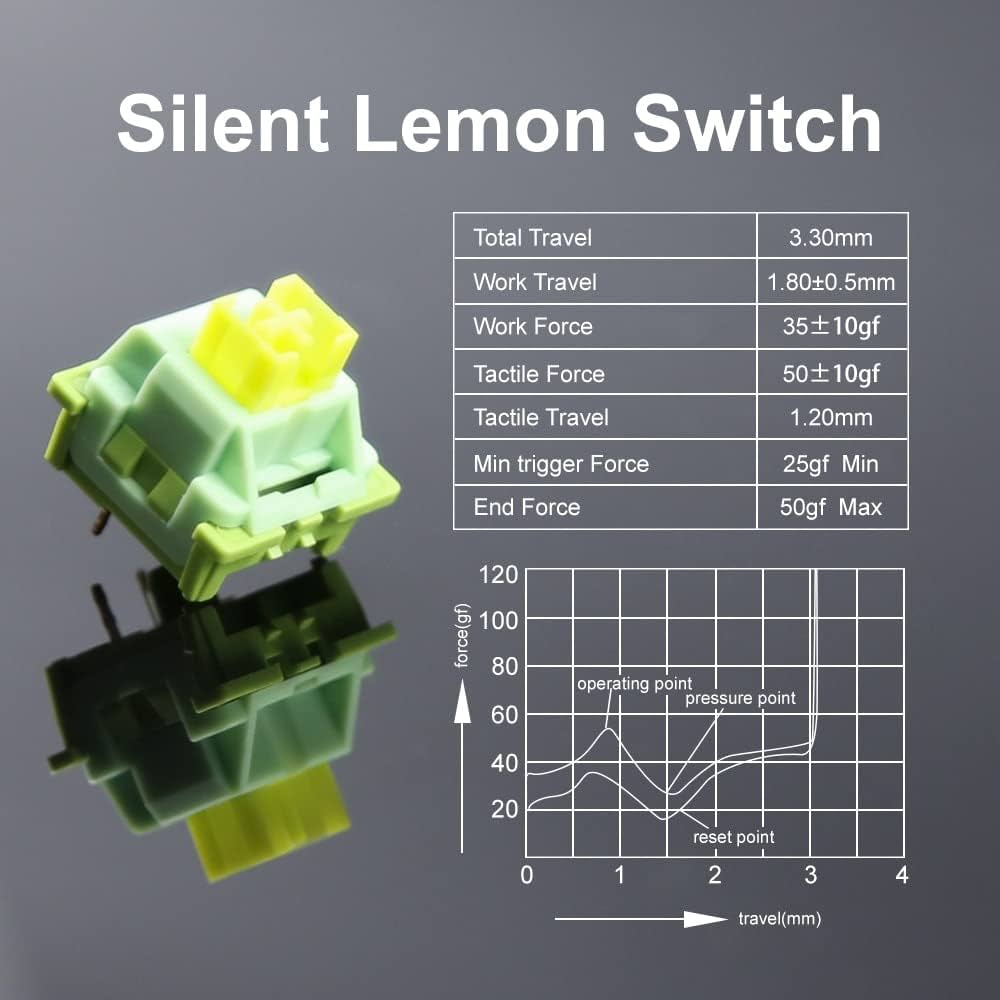

I went with the Outemu Lemon silent tactile 3-pin switches (NOT the V2, which are 5-pin), and they work great. They have a good amount of tactility and are very quiet. The only noise left now is the rattling stabilizers, which I will get to later.

Removing the old switches

Removing the old switches was a massive PITA. The cheaply made sockets are inconsistent and some switches are very tightly seated; some pins had also corroded over time, making it impossible to pull the switch out from the top with just a switch puller.

I found that the best way to remove all the switches was to unscrew the bottom cover and push the center stem out from the back while slowly prying the top/bottom of the switch up from the front using a small, flat screwdriver. This took me over an hour and a lot of elbow grease, and also damaged a dozen switches along the way (broken pins, damaged outer casing) – so be prepared to toss the old switches (which are crap anyway).

Installing new switches, reassembly

The switch installation process was straightforward. Since I had the keyboard apart, it was also a good chance to make sure every switch sat properly on the board. The same socket problem shows up during installation – some are tighter than others, so pushing the switches in while the case is apart makes it easier to check that each one sits flat on the board.

Extra dampening – painter’s tape

I also added two layers of painter’s tape (aka masking tape – I use a high quality one from 3M so it doesn’t leave sticky residue) over the bottom of the circuit board to add some extra dampening.

Stabilizer noise

With the switches now extremely quiet, the only noise you notice is the rattling from the cheap stabilizers, which can’t be replaced. There are only two stabilizers on this keyboard: one on the spacebar and one on the right shift key.

The rattle seems to come primarily from the hinge on the keyboard plate. Adding some dielectric grease should help reduce the noise. I didn’t have any on hand, so I haven’t tried it yet.

Conclusion

I know this is a cheap keyboard, but I didn’t want to add it to the landfill, so being able to reuse it for the office is great. The new pack of 90 switches cost me less than SGD $30 on Aliexpress, which is cheaper than buying another keyboard.

After typing (including this blog post) on the keyboard for a while, I must say the Outemu Lemon switches are much better than the original blue clicky ones, which I felt were too noisy, wobbly and inconsistent.